When data quality issues strike, they often do so silently, until it’s too late. A missed alert, a stale dataset or a schema change can break downstream reports. In today’s AI-powered environment, these slip-ups stall progress and undermine trust in critical insights.

More than just a buzzword, data observability provides real-time visibility and control, enabling you to prevent data issues before they impact business outcomes. It empowers teams to detect anomalies early, maintain trust and ensure every decision is backed by dependable information.

Let’s explore how you can combine data quality and observability into a single, proactive practice to protect your pipelines and make faster, more confident decisions.

Why ‘Fit-for-Purpose’ Data Matters in 2026

You’re about to demo a new AI-powered dashboard when you notice the metrics have flat-lined overnight. Sales suddenly show zero, questions arise and trust evaporates. In short, a data quality failure.

At First San Francisco Partners (FSFP), we define data quality as “fit for purpose.” That means data must suit its intended use, whether it fuels AI, analytics or autonomous agents. Even the best-designed AI and analytics platforms are only as strong as the data feeding them.

Industry studies show that bad data costs organizations $12.9 million per year on average. Yet many teams still rely on reactive cleanup instead of proactive monitoring.

As described in ‘Firefighting Management’, observability turns reactive firefighting into proactive reliability.

Data Quality vs. Data Observability – Two Sides of Trust

Think of data quality as the recipe for your dish. It lists all the ingredients you need, along with the correct measurements. Observability, on the other hand, is your kitchen thermometer, providing you with near real-time insights into whether things are heating up or burning down.

- Data Quality (DQ): Helps ensure data is accurate, complete, timely and consistent.

- Observability: Provides near real-time visibility into data pipelines, including monitoring freshness, identifying anomalies, detecting drift, tracking schema changes and detecting lineage breaks.
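To make two of these observability checks concrete, here is a minimal Python sketch of a freshness check and a schema-change check. The function names, the 24-hour threshold and the sample columns are all assumptions made for illustration; they are not part of any FSFP tool or vendor API.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_loaded_at, max_age, now=None):
    """Return True when the dataset was not refreshed within max_age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age


def schema_changes(expected, observed):
    """Compare column -> type mappings and report what differs."""
    shared = set(expected) & set(observed)
    return {
        "missing": sorted(set(expected) - set(observed)),
        "added": sorted(set(observed) - set(expected)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


# Hypothetical values: the table last loaded 48 hours before the check.
now = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
last_load = datetime(2026, 1, 13, 9, 0, tzinfo=timezone.utc)
stale = is_stale(last_load, timedelta(hours=24), now=now)

# Hypothetical expected vs. observed schema for an orders table.
expected = {"order_id": "int", "amount": "decimal", "created_at": "timestamp"}
observed = {"order_id": "string", "amount": "decimal", "region": "string"}
changes = schema_changes(expected, observed)

print(stale)    # True
print(changes)  # {'missing': ['created_at'], 'added': ['region'], 'retyped': ['order_id']}
```

In practice, a monitoring platform would run checks like these on a schedule and route the results to alerts, but the underlying comparisons are often this simple.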

As stated in the Gartner Data Observability Market Guide from Monte Carlo:

“Data quality is concerned with data itself from a business context, while data observability has additional interests and concerns the system and environment that delivers that data.”

Both data quality and observability come to life through data governance, which sets the policies, roles and accountability needed to ensure your pipelines consistently deliver trusted, business-ready data.

Data quality helps you put your organization's data pieces together.

FSFP’s Six-Step Data Quality & Observability Framework

At FSFP, we use a proven six-step framework to help you establish sustainable, business-aligned practices that build trust in your data and scale with your needs.

- Metadata-driven rules & dimensions: We help you define quality rules once and enforce them consistently across systems. This ensures accuracy, completeness, timeliness and consistency are always aligned with business outcomes.

- Assessment & remediation: Through profiling, root-cause analysis and cleansing tactics, we identify and resolve data issues quickly. This approach accelerates remediation and speeds up value realization.

- Governance integration: Data quality works best when tied to data governance. We embed stewardship roles, governance councils, and policies into your program to ensure accountability across the enterprise.

- Near real-time observability: We establish alert thresholds, lineage graphs and monitoring dashboards. For example, we can flag an 80% drop in customer transactions and trace the issue back to the source system within minutes.

- Right-fit technology: As a vendor-neutral organization, we recommend and implement the tools that best suit your environment. This approach ensures your technology investments align with both your current stack and your long-term goals.

- Continuous improvement: Quality and observability are not one-time projects. We help you embed KPIs, iteration cycles and training programs to sustain momentum as your business evolves.

FSFP believes that sustainment ensures long-term success, not one-time wins. Implementing this framework sets the stage for building a scalable monitoring stack.
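The alert-threshold idea from the observability step can be sketched in a few lines of Python. This is an illustrative sketch only: the function names, the sample transaction counts and the use of a simple mean as the baseline are assumptions, and a production setup would likely account for seasonality and day-of-week patterns.

```python
from statistics import mean


def volume_drop(history, current):
    """Fractional drop of the current count against the recent average."""
    baseline = mean(history)
    if baseline <= 0:
        return 0.0  # no meaningful baseline to compare against
    return max(0.0, (baseline - current) / baseline)


def should_alert(history, current, threshold=0.8):
    """Flag when volume falls by at least the threshold (80% by default)."""
    return volume_drop(history, current) >= threshold


# Hypothetical daily customer transaction counts (average: 10,000).
daily_transactions = [10_000, 10_400, 9_800, 10_200, 9_600]
today = 1_500

drop = volume_drop(daily_transactions, today)
alert = should_alert(daily_transactions, today)

print(f"drop={drop:.0%}, alert={alert}")  # drop=85%, alert=True
```

Pairing an alert like this with lineage metadata is what lets a team trace the drop back to the source system rather than just noticing it.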

Building a Monitoring Stack: From Lineage to Alerting

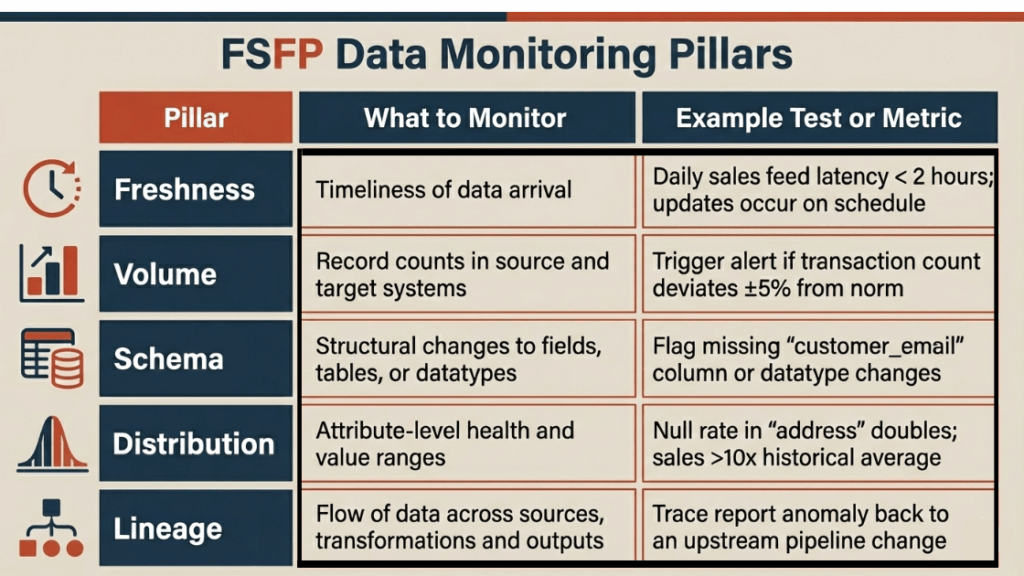

To make observability practical, you’ll want to build your monitoring stack around the five core pillars of data health. Each pillar offers a distinct perspective for identifying issues before they impact downstream users.

FSFP Data Monitoring Pillars

Popular platforms such as Monte Carlo, Datafold, and Collibra support these checks, but the best choice depends on your stack and maturity.

For more tooling insights, read our blog: How to Choose the Best Data Governance Tool for 2026.

Injecting Semantic Intelligence for Context-Aware Quality Checks

Semantic intelligence is the practice of connecting business meaning to your data, allowing quality rules to become context-aware and enforceable across systems.

For example, when you define “Customer” once and map it to every related data entity, you can apply rules consistently. This may include checking for duplicate IDs, ensuring that required attributes such as “customer_email” are populated, or validating that all records match the agreed-upon business definitions.
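As a rough illustration of defining “Customer” once and applying the same rules everywhere, consider the Python sketch below. The dataset names, column mappings and the `check_customer` helper are hypothetical, invented for this example; only the `customer_email` attribute comes from the scenario above.

```python
from collections import Counter

# One shared business definition of "Customer" (hypothetical).
CUSTOMER = {"id": "customer_id", "required": ["customer_email"]}

# Hypothetical mappings from the shared attribute names to physical columns.
MAPPINGS = {
    "crm_contacts": {"customer_id": "contact_id", "customer_email": "email"},
    "billing_accounts": {"customer_id": "cust_id", "customer_email": "billing_email"},
}


def check_customer(dataset, rows):
    """Apply the shared Customer rules to one mapped dataset."""
    cols = MAPPINGS[dataset]
    id_col = cols[CUSTOMER["id"]]

    # Rule 1: customer IDs must be unique.
    counts = Counter(row.get(id_col) for row in rows)
    duplicates = sorted(k for k, n in counts.items() if n > 1)

    # Rule 2: required attributes must be populated.
    missing = {}
    for attr in CUSTOMER["required"]:
        col = cols[attr]
        missing[attr] = [
            row.get(id_col) for row in rows
            if not (row.get(col) or "").strip()
        ]
    return {"duplicate_ids": duplicates, "missing_required": missing}


crm_rows = [
    {"contact_id": "C1", "email": "a@example.com"},
    {"contact_id": "C2", "email": ""},
    {"contact_id": "C1", "email": "a@example.com"},
]
billing_rows = [{"cust_id": "B1", "billing_email": None}]

crm_result = check_customer("crm_contacts", crm_rows)
billing_result = check_customer("billing_accounts", billing_rows)

print(crm_result)      # {'duplicate_ids': ['C1'], 'missing_required': {'customer_email': ['C2']}}
print(billing_result)  # {'duplicate_ids': [], 'missing_required': {'customer_email': ['B1']}}
```

The design point is that the rules live with the business definition, not with each table, so adding a new system means adding a mapping rather than rewriting the checks.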

This isn’t theory alone. A systematic review of semantic technologies in electronic health records revealed that approaches such as controlled vocabularies, ontologies and knowledge graphs enhanced data accuracy, completeness and consistency. These methods also align with FAIR principles, making data findable, accessible, interoperable and reusable.

Accurate semantics are at the foundation of AI readiness, ensuring that generative outputs are consistent, explainable and trustworthy.

Harmonizing Salesforce Data & Preparing for Autonomous Agents

Salesforce environments often suffer from object sprawl, duplicate picklists and uncontrolled flows. FSFP’s deep understanding of Agentforce helps our clients safely bridge governance into automation across their organization's data frameworks.

With machine-readable governance and observability in place, you can catch inconsistencies before your autonomous agents act on them, keeping policies enforced, data clean and agents trustworthy.

FSFP can help you with a readiness assessment to prepare your Salesforce data for autonomous agents.

Talk to a data consulting expert today.

30-60-90-Day Quick-Start Roadmap

Getting started with data quality and observability does not have to be overwhelming. A phased roadmap helps you progress quickly and see results early:

- In 30 days: Inventory critical data assets, draft DQ SLAs and stand up basic monitoring

- In 60 days: Roll out observability alerts, integrate with governance council and pilot semantic tagging

- In 90 days: Automate RCA loops, measure lift in trust scores and prepare Salesforce data for agents

Ready to accelerate your data journey? Partner with FSFP to build trust, scale AI and make information actionable.
